Introduction

The High-Throughput Computing (HTC) service of the SCIGNE platform is based on a computing cluster optimised for data-parallel task processing. This cluster is connected to a larger infrastructure, the EGI European Computing Grid.

Although the recommended way to interact with the HTC service is the DIRAC software, you can also manage your jobs by directly using the Computing Element (CE) endpoint of SCIGNE. The CE software installed at SCIGNE is the ARC CE.

After an introduction to grid computing, this documentation describes how to submit and monitor your computations, and how to retrieve their output, with the ARC CE client.

The Computing Grid

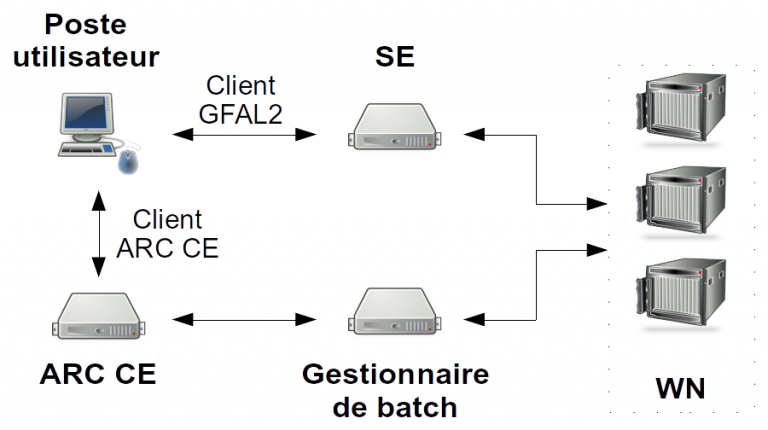

This section presents the services involved when a job is submitted to the computing grid. The interactions between these services during the job workflow are illustrated in the figure above. The acronyms are detailed in the table The main services of the computing grid.

In this example, the user manages jobs directly through the ARC CE server, without using DIRAC. Before the job is submitted, large data sets (> 10 MB) must be copied to the Storage Element (SE); the usage of the SE is detailed in the storage user guide. Once the job is submitted, the ARC CE takes charge of it and creates a corresponding job in the local batch scheduler of the SCIGNE platform (PBS). The batch scheduler dispatches the jobs to the Worker Nodes (WN), from which the data sets copied to the SE are accessible. Once the job has completed and its results have been copied to the SE, the CE collects the execution information and makes it available to the user.

While a job is running, the user can query the ARC CE for its status.

| Element | Role |
|---|---|
| UI | The UI (User Interface) is the workstation where the ARC CE client is installed. It is used to submit jobs, monitor their status and retrieve their output. |
| SE | The SE (Storage Element) is the grid element that manages storage. It stores large data sets and makes them available to the worker nodes. It is reachable through several protocols (HTTPS, XRootD, etc.). |
| ARC CE | The ARC CE (Computing Element) is the server that interacts with the batch scheduler. It receives jobs and submits them to the local cluster. |
| WN | The WN (Worker Node) is the server where the computation is performed. It connects to the SE to retrieve the required data sets and to store output data. |
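The 10 MB rule from the workflow described above can be applied mechanically when preparing a job. The following sketch is only an illustration: the helpers `needs_se` and `classify_inputs` are hypothetical, and the threshold assumes that "10 MB" means 10 MiB (10 × 1024 × 1024 bytes); adjust it if in doubt.

```shell
#!/bin/sh
# Hypothetical helpers: decide whether an input file should be copied to the
# SE (larger than 10 MB) or shipped with the job through inputFiles.
# Assumption: "10 MB" is taken here as 10 MiB = 10 * 1024 * 1024 bytes.
SE_THRESHOLD=$((10 * 1024 * 1024))

# Succeeds (status 0) when the file is larger than the threshold.
needs_se() {
    size=$(wc -c < "$1") || return 2
    [ "$size" -gt "$SE_THRESHOLD" ]
}

# Classify every regular file in the current directory.
classify_inputs() {
    for f in ./*; do
        [ -f "$f" ] || continue
        if needs_se "$f"; then
            echo "SE:         $f (copy it with gfal-copy before submitting)"
        else
            echo "inputFiles: $f"
        fi
    done
}
```

Running `classify_inputs` in the job directory gives a quick overview before writing the job description.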

Prerequisites

In order to submit a job to an ARC CE, two prerequisites must be met:

- a workstation equipped with the ARC CE client;

- a valid certificate registered in a virtual organisation (VO). The document Managing a certificate details how to get a certificate and enrol in a local, regional or disciplinary VO.

ARC CE Client

Jobs are managed with the ARC CE client, a command-line tool.

If you have an account at IPHC, you can simply use the UI server, as the ARC CE client is already installed there.

You can also install the ARC CE client on your own GNU/Linux workstation:

- With Ubuntu:

$ sudo apt install nordugrid-arc-client

- With Red Hat and its derivatives (e.g. CentOS):

$ sudo yum install -y epel-release

$ sudo yum update

$ sudo yum install -y nordugrid-arc-client
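To check the installation, you can verify that the client commands used in this guide (arcproxy, arcsub, arcstat and arcget) are available in your PATH. A minimal sketch based on the POSIX `command -v` builtin; the function name `missing_arc_tools` is hypothetical:

```shell
#!/bin/sh
# Print the name of each ARC client command used in this guide that cannot
# be found in PATH (prints nothing when all of them are available).
missing_arc_tools() {
    for cmd in arcproxy arcsub arcstat arcget; do
        command -v "$cmd" >/dev/null 2>&1 || echo "$cmd"
    done
}

missing=$(missing_arc_tools)
if [ -n "$missing" ]; then
    # $missing is left unquoted on purpose to print the names on one line.
    echo "ARC CE client not fully installed; missing commands:" $missing
else
    echo "ARC CE client commands found"
fi
```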

Certificate

The private and public parts of your certificate must be placed in the $HOME/.globus directory of the server or workstation from which you submit your jobs. These files must be readable only by their owner:

$ ls -l $HOME/.globus

-r-------- 1 user group 1935 Feb 16 2010 usercert.pem

-r-------- 1 user group 1920 Feb 16 2010 userkey.pem

In this documentation, we use the vo.grand-est.fr VO, which is the regional VO. Replace it with the name of the VO you are using (e.g. biomed or vo.sbg.in2p3.fr).
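If the certificate files do not already have the `-r--------` permissions shown in the listing above (mode 0400), they can be restricted with `chmod`. A minimal sketch; the function name `secure_globus_dir` is hypothetical:

```shell
#!/bin/sh
# Restrict usercert.pem and userkey.pem to read-only access by their owner
# (mode 0400, displayed as -r-------- by ls -l).
# The directory defaults to $HOME/.globus; another one can be passed as $1.
secure_globus_dir() {
    dir=${1:-"$HOME/.globus"}
    for f in usercert.pem userkey.pem; do
        if [ ! -f "$dir/$f" ]; then
            echo "missing certificate file: $dir/$f" >&2
            return 1
        fi
        chmod 400 "$dir/$f" || return 1
    done
    # Show the resulting permissions.
    ls -l "$dir"
}
```

Once the function is defined, running `secure_globus_dir` without arguments applies it to $HOME/.globus.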

Job Management with the ARC CE Client

Proxy generation

Before submitting a job to the computing grid, you must generate a valid proxy. This temporary certificate allows you to authenticate with all grid services. It is generated with the following command:

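$ arcproxy -S vo.grand-est.fr -c validityPeriod=24h -c vomsACvalidityPeriod=24h

The -S option selects the VO, and the -c options set the validity period of the proxy and of its VOMS attribute certificate (AC), here 24 hours each.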
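Because a proxy expires, scripts that submit jobs regularly may want to regenerate it only when its remaining lifetime is short. The sketch below is an illustration, not an ARC feature: the functions `proxy_seconds_left` and `ensure_proxy` and the 2-hour default threshold are hypothetical, and the parser assumes that `arcproxy -I` reports the remaining lifetime on a line such as `Time left for proxy: 18 hours 29 minutes 58 seconds`.

```shell
#!/bin/sh
# Convert the "Time left for proxy: ..." line of `arcproxy -I` (read on
# stdin) into a number of seconds. Assumes the "<n> <unit> <n> <unit> ..."
# format; prints nothing if the line is absent.
proxy_seconds_left() {
    awk -F': ' '/^Time left for proxy:/ {
        n = split($2, w, " ")
        total = 0
        for (i = 1; i < n; i += 2) {
            unit = w[i + 1]
            if (unit ~ /^day/)         total += w[i] * 86400
            else if (unit ~ /^hour/)   total += w[i] * 3600
            else if (unit ~ /^minute/) total += w[i] * 60
            else if (unit ~ /^second/) total += w[i]
        }
        print total
        exit
    }'
}

# Regenerate the proxy for the given VO when fewer than min_left seconds
# remain (hypothetical default: 7200 s, i.e. 2 hours).
ensure_proxy() {
    vo=${1:-vo.grand-est.fr}
    min_left=${2:-7200}
    left=$(arcproxy -I 2>/dev/null | proxy_seconds_left)
    if [ "${left:-0}" -lt "$min_left" ]; then
        arcproxy -S "$vo" -c validityPeriod=24h -c vomsACvalidityPeriod=24h
    fi
}
```

If no proxy exists, `arcproxy -I` prints no lifetime line, so `ensure_proxy` treats the remaining time as 0 and generates a new proxy.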

The following command displays information about your proxy, including its remaining validity:

$ arcproxy -I

Subject: /O=GRID-FR/C=FR/O=CNRS/OU=IPHC/CN=Jerome Pansanel/CN=1544387362

Issuer: /O=GRID-FR/C=FR/O=CNRS/OU=IPHC/CN=Jerome Pansanel

Identity: /O=GRID-FR/C=FR/O=CNRS/OU=IPHC/CN=Jerome Pansanel

Time left for proxy: 18 hours 29 minutes 58 seconds

Proxy path: /tmp/x509up_u1001

Proxy type: X.509 Proxy Certificate Profile RFC compliant impersonation proxy - RFC inheritAll proxy

Proxy key length: 1024

Proxy signature: sha256

====== AC extension information for VO vo.grand-est.fr ======

VO : vo.grand-est.fr

subject : /O=GRID-FR/C=FR/O=CNRS/OU=IPHC/CN=Jerome Pansanel

issuer : /O=GRID-FR/C=FR/O=CNRS/OU=LAL/CN=grid12.lal.in2p3.fr

uri : grid12.lal.in2p3.fr:20018

attribute : /vo.grand-est.fr/Role=NULL/Capability=NULL

Time left for AC: 18 hours 30 minutes 2 seconds

Job submission

Submitting a job with the ARC CE client requires a file describing the job, written in the xRSL (Extended Resource Specification Language) format. The xRSL file presented in the example below is fully functional and can be used to run a simple job. In this document, this file is named myjob.xrsl.

&

(executable = "/bin/bash")

(arguments = "myscript.sh")

(jobName="mysimplejob")

(inputFiles = ("myscript.sh" "") )

(stdout = "stdout")

(stderr = "stderr")

(gmlog="simplejob.log")

(wallTime="240")

(runTimeEnvironment="ENV/PROXY")

(count="1")

(countpernode="1")

Each attribute of this example has a specific role:

- executable defines the command to execute on the worker node. It can be the name of a shell script.

- arguments specifies the arguments passed to the program defined by the executable attribute; in this example, myscript.sh is a bash script passed as an argument to the bash command.

- jobName defines the name of the job.

- inputFiles lists the files needed for the computation, which are sent to the ARC CE. The empty string "" after a file name indicates that the file is available locally, in the directory from which the ARC CE commands are executed.

- stdout is the name of the file where the standard output is stored.

- stderr indicates the name of the file where the error output is stored.

- gmlog is the name of the directory where diagnostic information about the job is stored.

- wallTime is the maximum execution time for a job.

- runTimeEnvironment indicates the execution environment required by a job.

- count is an integer indicating the number of tasks executed simultaneously (it is greater than 1 for MPI jobs).

- countpernode is an integer indicating how many tasks should run on a single server. If all tasks need to run on the same server, the value of the count and countpernode attributes should be identical.
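When many similar jobs are needed, the job description can be generated by a script rather than written by hand. A minimal sketch using a shell here-document; the function `make_xrsl` and its parameters are hypothetical, and the generated attributes are those of myjob.xrsl described above (wallTime defaults to the same value, 240).

```shell
#!/bin/sh
# Hypothetical helper: write an xRSL job description with the same
# attributes as myjob.xrsl.
# Usage: make_xrsl <output file> <script> <job name> [wallTime, default 240]
make_xrsl() {
    out=$1
    script=$2
    name=$3
    walltime=${4:-240}
    cat > "$out" <<EOF
&
(executable = "/bin/bash")
(arguments = "$script")
(jobName = "$name")
(inputFiles = ("$script" ""))
(stdout = "stdout")
(stderr = "stderr")
(gmlog = "$name.log")
(wallTime = "$walltime")
(runTimeEnvironment = "ENV/PROXY")
(count = "1")
(countpernode = "1")
EOF
}
```

For instance, `for i in 1 2 3; do make_xrsl "job$i.xrsl" myscript.sh "mysimplejob$i"; done` produces three descriptions, each of which can then be submitted with arcsub.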

An example of the myscript.sh script is shown below:

#!/bin/bash

echo "===== Begin ====="

date

echo "The program is running on $HOSTNAME"

date

dd if=/dev/urandom of=fichier.txt bs=1G count=1

gfal-copy file://`pwd`/fichier.txt root://sbgdcache.in2p3.fr/vo.grand-est.fr/lab/name/fichier.txt

echo "===== End ====="

Executables used in the myscript.sh script must be installed on the grid. Their installation is carried out by the support team of the SCIGNE platform: do not hesitate to contact them with any enquiry.
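Since an error in myscript.sh is only discovered once the job runs on a worker node, it can be worth checking the script locally before submitting it. `bash -n` parses a script without executing it, so it catches syntax errors but not missing commands (such as gfal-copy being unavailable locally). The helper name `check_job_script` is hypothetical:

```shell
#!/bin/sh
# Check the syntax of a job script with `bash -n` (parse only, no execution)
# before submitting it. Missing commands are NOT detected this way.
check_job_script() {
    script=${1:-myscript.sh}
    if bash -n "$script"; then
        echo "$script: syntax OK"
    else
        echo "$script: syntax error, fix it before running arcsub" >&2
        return 1
    fi
}
```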

The gfal-copy command copies files to an SE. When you submit jobs at SCIGNE, it is recommended to use the sbgdcache.in2p3.fr SE, which is the closest one. The usage of the SE is described in the dedicated guide.

Once the myjob.xrsl and myscript.sh files are created, the job can be submitted to the ARC CE with the following command:

$ arcsub -c sbgce2.in2p3.fr myjob.xrsl

Job submitted with jobid: gsiftp://sbgce2.in2p3.fr:2811/jobs/VnhGHkl9RUnhJKeJ7oqv78RnGBFKFvDGEDDmxDFKDmABFKDmnKeGDn

The -c option selects the ARC CE server.

The job identifier is stored in a local database: $HOME/.arc/jobs.dat.
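In a script, the job identifier can be captured from the arcsub output and kept for later arcstat and arcget calls. A minimal sketch, assuming the `Job submitted with jobid: ...` output line shown above; `submit_job` and the jobids.txt file are hypothetical names:

```shell
#!/bin/sh
# Submit an xRSL file to the given ARC CE and print the job identifier
# extracted from the "Job submitted with jobid: <id>" line of arcsub.
# Prints nothing if that line is not found.
submit_job() {
    ce=$1
    xrsl=$2
    arcsub -c "$ce" "$xrsl" | sed -n 's/^Job submitted with jobid: //p'
}

# Usage (jobids.txt is a hypothetical file for keeping track of jobs):
#   jobid=$(submit_job sbgce2.in2p3.fr myjob.xrsl)
#   [ -n "$jobid" ] && echo "$jobid" >> jobids.txt
```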

Monitoring job status

To monitor the status of a job, use the following command with the job identifier:

$ arcstat gsiftp://sbgce2.in2p3.fr:2811/jobs/VnhGHkl9RUnhJKeJ7oqv78RnGBFKFvDGEDDmxDFKDmABFKDmnKeGDn

Job: gsiftp://sbgce2.in2p3.fr:2811/jobs/VnhGHkl9RUnhJKeJ7oqv78RnGBFKFvDGEDDmxDFKDmABFKDmnKeGDn

Name: mysimplejob

State: Finishing

Status of 1 jobs was queried, 1 jobs returned information

For a short job, you may have to wait some time before the job status is updated.
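Instead of running arcstat by hand, a script can poll it until the job reaches a final state. This sketch assumes that arcstat prints a `State:` line as in the output above, and treats Finished, Failed, Killed and Deleted as final states; the function names and the 60-second default interval are hypothetical.

```shell
#!/bin/sh
# Print the job state (first word after "State:") reported by arcstat.
job_state() {
    arcstat "$1" 2>/dev/null | awk '$1 == "State:" { print $2; exit }'
}

# Poll arcstat every $2 seconds (default 60) until the job reaches a final
# state, then print that state.
wait_for_job() {
    jobid=$1
    interval=${2:-60}
    while :; do
        state=$(job_state "$jobid")
        case $state in
            Finished|Failed|Killed|Deleted)
                echo "$state"
                return 0
                ;;
        esac
        sleep "$interval"
    done
}
```

A short interval is not useful, since the reported status may lag behind the actual job state.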

Getting the output files

Once the job is finished (job status: Finished), the content of the output files, described by the stdout and stderr attributes, can be retrieved with:

$ arcget gsiftp://sbgce2.in2p3.fr:2811/jobs/VnhGHkl9RUnhJKeJ7oqv78RnGBFKFvDGEDDmxDFKDmABFKDmnKeGDn

The files are downloaded into the VnhGHkl9RUnhJKeJ7oqv78RnGBFKFvDGEDDmxDFKDmABFKDmnKeGDn directory, created in the current directory.
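As in the example above, the download directory is named after the last component of the job identifier, so a script can locate the output files directly. A minimal sketch; `fetch_output` is a hypothetical helper that retrieves the output with arcget and prints the job's standard output (the stdout file named in myjob.xrsl):

```shell
#!/bin/sh
# Retrieve the output of a finished job with arcget, then print its
# standard output. The download directory is named after the last
# component of the job identifier (everything after the final "/").
fetch_output() {
    jobid=$1
    arcget "$jobid" || return 1
    outdir=${jobid##*/}
    cat "$outdir/stdout"
    # Show the error output too, if the job produced any.
    if [ -s "$outdir/stderr" ]; then
        echo "--- stderr ---" >&2
        cat "$outdir/stderr" >&2
    fi
}
```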

Complementary Documentation

The reference below gives a more detailed view of the ARC CE client and the computing grid: